How to Read AI Metrics Like a Confident Veterinarian

A practical guide for veterinary professionals who want to understand AI validation data, not just trust it.

ABSTRACT

Vetology publishes 11 performance metrics for each of its 89+ veterinary radiology classifiers, built on a foundation of 300,000 multi-image patient cases. This article explains what each metric means in plain clinical language so veterinary professionals can interpret AI screening results with confidence. It covers sensitivity and specificity (how well the AI classifies cases), prevalence (how common a condition is in real-world practice), positive and negative predictive values (how reliable an individual prediction is once prevalence is factored in), confidence intervals, radiologist agreement rates, AUC, F1 score, and accuracy.

A key distinction: sensitivity and specificity evaluate model performance independent of prevalence, while PPV and NPV evaluate prediction reliability and are directly affected by how common a disease is. For rare conditions, a PPV that meaningfully exceeds the underlying prevalence indicates real predictive value. All metrics are published with full transparency at vetology.net/ai-classifier-performance.

Key terms: veterinary AI, classifier performance, sensitivity, specificity, positive predictive value (PPV), negative predictive value (NPV), prevalence, AUC, radiologist agreement rate, confusion matrix, veterinary radiology, AI validation, diagnostic AI screening

Why This Matters for Your Practice

We recently expanded our public AI performance dashboard from four metrics to eleven for each of our 89+ classifiers. That is a lot of numbers. And if you are like most veterinary professionals, you did not go to vet school to interpret ROC curves.

But these metrics directly affect how you use AI screening results in your clinical decisions. When an AI report flags cardiomegaly or rules out pleural effusion, the metrics behind that classifier tell you how much weight to give the result. Understanding a few key numbers can change how confidently you act on what the AI is telling you.

Here is what each metric means, in plain language, with real examples from our published data.

The Two Metrics You Probably Already Know

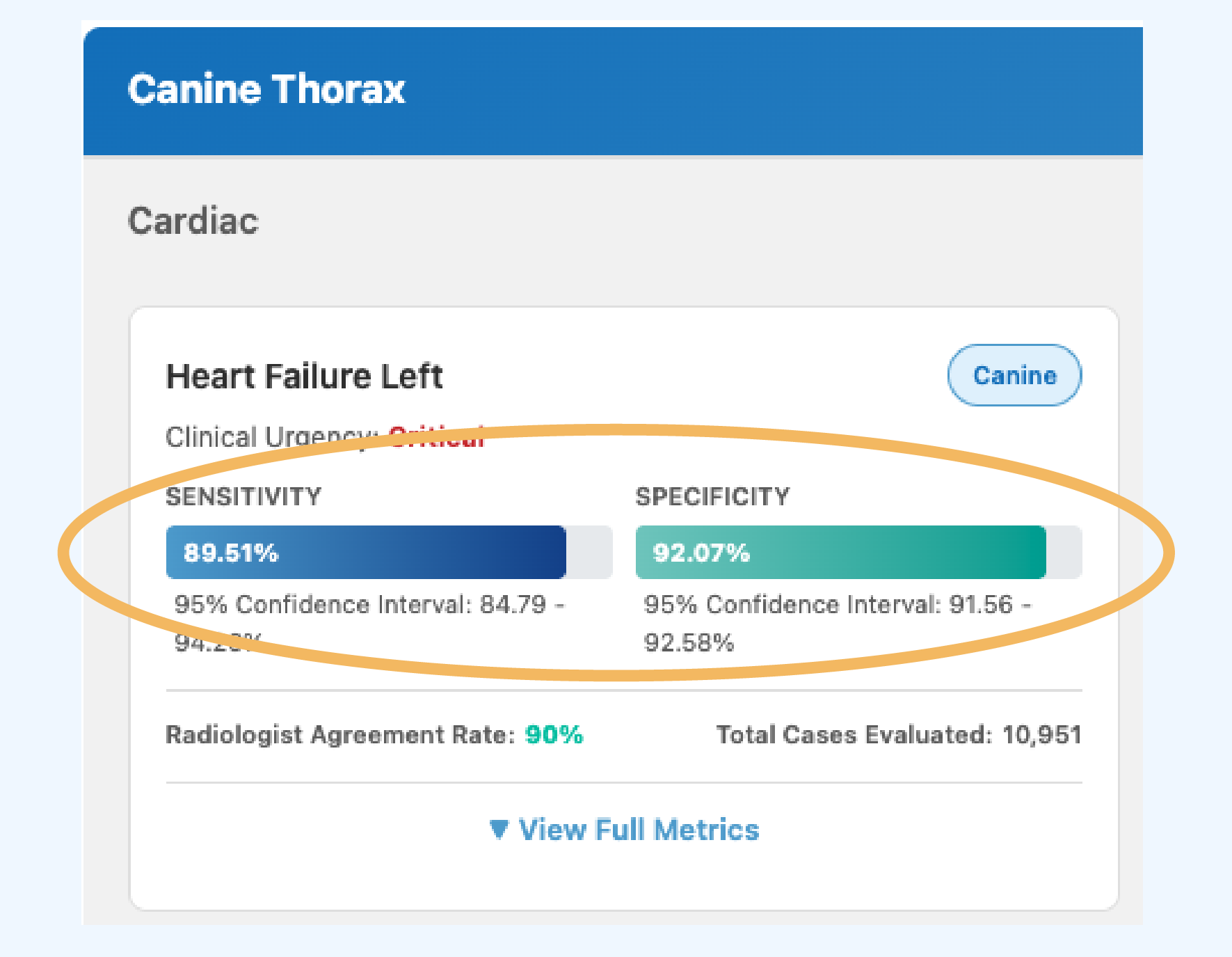

Sensitivity (the “catch rate”)

When the condition is present, how often does the AI detect it?

A sensitivity of 89.5% means the AI correctly identifies the condition in roughly 89 or 90 out of every 100 cases where it truly exists. The remaining cases are missed findings (false negatives).

What this means for you: Higher sensitivity means fewer missed findings. For conditions where early detection is critical, like heart failure, you want sensitivity to be as high as possible.

Specificity (the “all clear” rate)

When the condition is absent, how often does the AI correctly say so?

A specificity of 92.1% means that when the condition is truly absent, the AI correctly reports “not present” about 92 out of 100 times. The rest are false alarms (false positives).

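What this means for you: Higher specificity means fewer false alarms and fewer unnecessary follow-ups. Keep in mind that specificity describes how the AI behaves on patients who do not have the condition. How much to trust an individual “not present” result is a separate question, answered by NPV later in this article.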
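As a minimal sketch of the two definitions above, sensitivity and specificity can be computed from the four cells of a confusion matrix. The counts here are hypothetical, chosen only so the results match the 89.5% and 92.1% figures used in this article; they are not Vetology's actual test set.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of truly positive cases that the classifier flagged."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Share of truly negative cases that the classifier cleared."""
    return true_neg / (true_neg + false_pos)


# Hypothetical test set: 200 cases with the condition, 1,000 without.
tp, fn = 179, 21   # condition present: flagged vs. missed
tn, fp = 921, 79   # condition absent: cleared vs. false alarm

sens = sensitivity(tp, fn)
spec = specificity(tn, fp)
print(f"sensitivity = {sens:.1%}")
print(f"specificity = {spec:.1%}")
```

Neither calculation uses the ratio of positive to negative cases, which is why these two metrics do not change with prevalence.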

Prevalence

How common is this condition in real-world practice?

We calculate prevalence from our clinical case database rather than the test set, so the number reflects actual clinical frequency. This tells you the baseline probability before the AI even looks at the image. A condition with 15% prevalence behaves very differently than one at 0.5%.

Why it matters here: Prevalence is essential for understanding the next two metrics, PPV and NPV. Without knowing how common a condition is, those numbers cannot be interpreted correctly.

REAL EXAMPLE

Our Heart Failure (Canine) classifier has 89.5% sensitivity and 92.1% specificity.

That means it catches about 9 out of 10 true heart failure cases, and when heart failure is truly absent, it correctly reports that about 92% of the time.

The Two Metrics That Answer Your Real Question

Sensitivity and specificity describe how the AI performs in controlled testing. But when you are looking at a patient’s results, the question you are actually asking is different: “The AI flagged this finding. Should I believe it?”

That is where PPV and NPV come in.

Sensitivity and Specificity start from the patient’s true status and ask how often the AI classifies that case correctly. Positive Predictive Value (PPV) and Negative Predictive Value (NPV) start from the AI’s output and ask how often that prediction is correct.

The biggest difference is that PPV and NPV metrics consider how prevalent a disease is, while Sensitivity and Specificity do not.

Sensitivity and Specificity are more useful for evaluating model performance, whereas PPV and NPV are more useful for interpreting model predictions.

Positive Predictive Value (PPV)

When the AI flags a finding, how often is it actually there?

PPV depends heavily on how common the condition is. A rare condition (low prevalence) will naturally have a lower PPV even with strong sensitivity and specificity, because unaffected patients vastly outnumber affected ones, so even a small false positive rate can produce more false alarms than true detections.

We calculate PPV using real-world prevalence from our clinical case database so the number reflects what you would see in practice.

Negative Predictive Value (NPV)

When the AI says a finding is not present, how often is it right?

For most conditions, NPV is very high because most patients do not have any given condition.

An NPV of 99.9% means you can be extremely confident in a negative result. This is where AI screening is often strongest: helping you confidently rule things out.
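The dependence on prevalence can be sketched with Bayes' rule. The function name `predictive_values` and the 90% sensitivity and specificity are illustrative assumptions, not figures from the dashboard; the two prevalence levels (0.5% and 15%) are the ones mentioned earlier in this article.

```python
def predictive_values(sens: float, spec: float, prevalence: float) -> tuple[float, float]:
    """Return (PPV, NPV) via Bayes' rule; all inputs are fractions in [0, 1]."""
    true_pos = sens * prevalence
    false_neg = (1 - sens) * prevalence
    true_neg = spec * (1 - prevalence)
    false_pos = (1 - spec) * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Same illustrative classifier, two very different prevalence levels.
ppv_rare, npv_rare = predictive_values(0.90, 0.90, 0.005)
ppv_common, npv_common = predictive_values(0.90, 0.90, 0.15)

print(f"prevalence 0.5%: PPV = {ppv_rare:.1%}, NPV = {npv_rare:.2%}")
print(f"prevalence 15%:  PPV = {ppv_common:.1%}, NPV = {npv_common:.2%}")
```

With identical sensitivity and specificity, PPV moves from a few percent to well over half as the condition becomes more common, while NPV stays high in both cases.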

REAL EXAMPLE

Our Heart Failure (Canine) classifier has 89.5% Sensitivity and 92.1% Specificity, with a PPV of 11.9% and an NPV of 99.9%. That looks lopsided, and it is supposed to.

Heart failure has a prevalence of about 1.2% in our clinical database. So when the AI flags it, there is roughly a 1 in 8 chance the condition is truly present, about ten times the baseline rate of 1 in 83. A PPV well above the underlying prevalence indicates the model is providing real predictive power beyond random guessing. When it says “no heart failure,” the 99.9% NPV means you can be very confident.
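One way to check the arithmetic behind this example is to imagine 10,000 dogs and count outcomes. The cohort size is an arbitrary assumption, and the inputs are the rounded figures quoted in this article. With those rounded inputs the PPV comes out near 12%, so the small gap from the published 11.9% is plausibly a rounding effect in the prevalence.

```python
cohort = 10_000       # hypothetical number of dogs screened
prevalence = 0.012    # about 1.2%, as quoted in the article
sens = 0.895          # published sensitivity
spec = 0.921          # published specificity

with_hf = cohort * prevalence        # dogs that truly have heart failure
without_hf = cohort - with_hf        # dogs that do not

true_pos = sens * with_hf            # correctly flagged
false_neg = with_hf - true_pos       # missed
true_neg = spec * without_hf         # correctly cleared
false_pos = without_hf - true_neg    # false alarms

ppv = true_pos / (true_pos + false_pos)
npv = true_neg / (true_neg + false_neg)
lift = ppv / prevalence              # how much a flag raises the odds over baseline

print(f"flags raised: {true_pos + false_pos:.0f}, of which correct: {true_pos:.0f}")
print(f"PPV = {ppv:.1%}, NPV = {npv:.1%}, lift over baseline = {lift:.1f}x")
```

Counting this way makes the lopsided result easier to see: roughly 120 affected dogs are competing with the false alarms generated by nearly 9,900 unaffected ones.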

How much PPV is clinically useful is ultimately a question for clinicians, and should be an ongoing discussion point as we continue to retrain and improve our models.

The clinical takeaway: a positive flag for a rare condition is a signal to look more closely, not a diagnosis. A negative result is a strong reassurance.

The Metrics That Give You Context

95% Confidence Interval

How precise is the measurement?

A confidence interval of “85% – 93%” means that, given the number of cases tested, the true sensitivity plausibly lies anywhere within that range. Narrower intervals generally mean more cases were tested and the measurement is more precise.

Wider intervals (common for rarer conditions) mean fewer test cases were available.

We publish confidence intervals for both sensitivity and specificity so you can judge how much certainty is behind each number.
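The link between test-set size and interval width can be illustrated with a Wilson score interval. This is a sketch under an assumption: the article does not say which interval method the dashboard uses, so Wilson is chosen here only as a common option for proportions. The case counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes: int, n: int, level: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a proportion such as sensitivity."""
    if n <= 0:
        raise ValueError("n must be positive")
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


# Two hypothetical test sets with nearly the same observed sensitivity (about 89.5%).
large_low, large_high = wilson_interval(179, 200)  # many positive cases
small_low, small_high = wilson_interval(34, 38)    # few positive cases

print(f"200 cases: {large_low:.1%} to {large_high:.1%}")
print(f" 38 cases: {small_low:.1%} to {small_high:.1%}")
```

The observed rate is almost identical in both sets, but the interval for the smaller set is more than twice as wide, which is the pattern to expect for rarer conditions.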

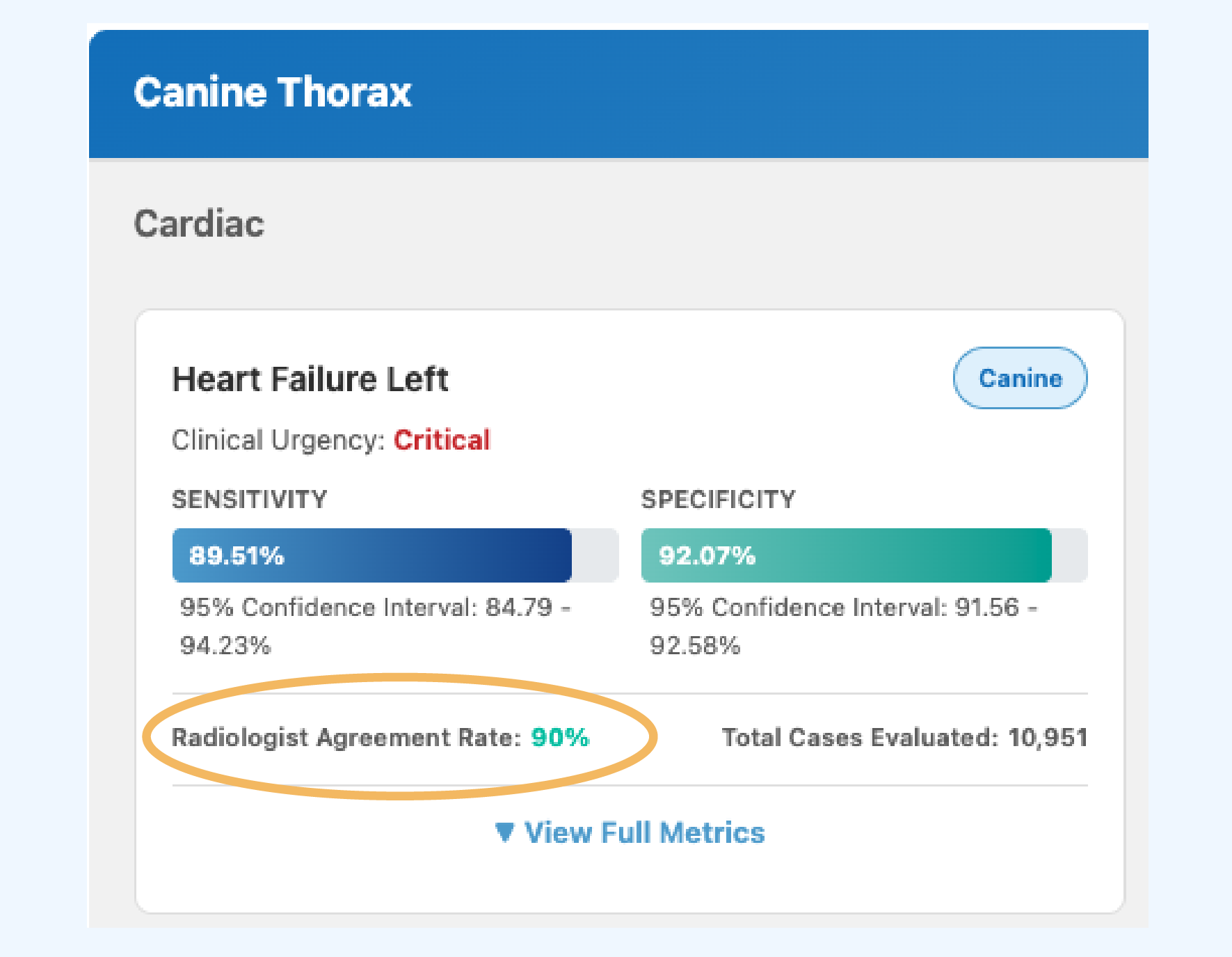

Radiologist Agreement Rate

How often do specialists agree with each other on this finding?

This might be the most important context metric on the dashboard. Some findings are straightforward and board-certified radiologists almost always agree; others are more subjective.

If specialists disagree with each other 10-30% of the time on a given finding, an AI that disagrees with a specialist about as often is working within the natural variability of expert interpretation.

This number gives you a benchmark for what “good” means for each specific condition.
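For intuition, an agreement rate can be thought of as the share of cases on which two readers give the same call. The sketch below assumes simple percent agreement between two readers; the article does not describe how the dashboard's Radiologist Agreement Rate is actually computed, and the reads shown are made up.

```python
def agreement_rate(reader_a: list[bool], reader_b: list[bool]) -> float:
    """Fraction of cases where two readers gave the same present/absent call."""
    if len(reader_a) != len(reader_b) or not reader_a:
        raise ValueError("both readers must rate the same non-empty set of cases")
    matches = sum(a == b for a, b in zip(reader_a, reader_b))
    return matches / len(reader_a)


# Hypothetical reads on 20 cases (True = finding present).
reader_a = [True] * 6 + [False] * 14
reader_b = [True] * 5 + [False] * 14 + [True]  # differs on cases 6 and 20

rate = agreement_rate(reader_a, reader_b)
print(f"agreement = {rate:.0%}")
```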

REAL EXAMPLE

Our Cardiomegaly (Canine) classifier has a Radiologist Agreement Rate of 93%. That means even board-certified radiologists disagree about 7% of the time on this finding.

The AI’s sensitivity of 75.6% and specificity of 86.3% should be understood in that context.

The Metrics for the Data-Curious

The remaining metrics are primarily used by data scientists and statisticians to evaluate classifier quality. They are published for completeness and for those who want the full picture.

AUC (Area Under the Curve)

How well does the classifier distinguish positive from negative overall?

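AUC is a single number summarizing how well the classifier separates positive from negative cases across all possible decision thresholds. 1.0 is perfect; 0.5 is no better than a coin flip. As a rule of thumb, values above 0.85 indicate strong performance.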

Our Heart Failure classifier has an AUC of 0.95.
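AUC has a concrete interpretation: it is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The sketch below computes it that way by comparing every positive-negative pair. The scores are invented for illustration, and the function name `pairwise_auc` is hypothetical.

```python
def pairwise_auc(pos_scores: list[float], neg_scores: list[float]) -> float:
    """AUC as the share of positive/negative pairs ranked correctly (ties count half)."""
    if not pos_scores or not neg_scores:
        raise ValueError("need at least one positive and one negative score")
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


# Invented classifier scores for 4 positive and 5 negative cases.
pos = [0.9, 0.8, 0.7, 0.4]
neg = [0.6, 0.5, 0.3, 0.2, 0.1]

auc = pairwise_auc(pos, neg)
print(f"AUC = {auc:.2f}")
```

Here 18 of the 20 pairs are ordered correctly; the one low-scoring positive case (0.4) is outranked by two negatives, which is what pulls the AUC below 1.0.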

F1 Score

How well does the classifier balance catching findings with avoiding false alarms?

F1 is the harmonic mean of precision (another name for PPV) and recall (another name for sensitivity). It is useful for comparing classifiers where both false positives and false negatives matter.
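A short sketch of the calculation, using hypothetical counts, shows why the harmonic mean is used: it is pulled toward the weaker of the two values, so a classifier cannot score well by excelling at only one of them.

```python
def f1_score(true_pos: int, false_pos: int, false_neg: int) -> float:
    """Harmonic mean of precision and recall, computed from raw counts."""
    precision = true_pos / (true_pos + false_pos)  # same quantity as PPV
    recall = true_pos / (true_pos + false_neg)     # same quantity as sensitivity
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 90 correct flags, 60 false alarms, 10 missed findings.
f1 = f1_score(true_pos=90, false_pos=60, false_neg=10)
print(f"F1 = {f1:.2f}")
```

In this example precision is 0.60 and recall is 0.90; their ordinary average would be 0.75, but F1 lands lower because the weaker value counts for more.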

Accuracy

What percentage of all cases did the AI get right?

This sounds like the most important number, but it can be misleading for rare conditions. If a condition has 1% prevalence, a system that always says “not present” would be 99% accurate while catching nothing. That is why we publish sensitivity and specificity alongside accuracy.
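The always-negative scenario is easy to reproduce. This sketch builds a hypothetical set of 1,000 cases at 1% prevalence and scores a "classifier" that never flags anything.

```python
# Hypothetical data: 10 affected cases among 1,000 (1% prevalence).
labels = [1] * 10 + [0] * 990   # 1 = condition present
predictions = [0] * 1000        # a system that always says "not present"

correct = sum(p == y for p, y in zip(predictions, labels))
accuracy = correct / len(labels)

caught = sum(1 for p, y in zip(predictions, labels) if p == 1 and y == 1)
sensitivity = caught / sum(labels)

print(f"accuracy = {accuracy:.0%}, sensitivity = {sensitivity:.0%}")
```

Accuracy looks excellent while sensitivity is zero, which is why accuracy alone says little about a classifier for a rare condition.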

How to Use This in Your Practice

You do not need to memorize these metrics. But the next time you review an AI screening report, three quick checks can change how you use the results:

- Check the NPV for negative results. For most conditions, the NPV is 99%+. When the AI says “not present,” you can move on with confidence.

- Check the prevalence for positive flags. A positive flag on a rare condition (prevalence under 2%) is a signal to investigate further, not a confirmation. A positive flag on a common condition (prevalence above 10%) carries more weight.

- Check the Radiologist Agreement Rate for borderline calls. If specialists disagree 20% of the time on a finding, an AI result in the gray zone is reflecting genuine clinical ambiguity, not a system failure.

The full metrics for all 89+ classifiers are published at vetology.net/ai-classifier-performance. We publish them because informed trust is better than blind trust, and veterinary professionals deserve the data to make their own judgment calls.

View the complete AI performance dashboard

Sensitivity, specificity, PPV, NPV, confidence intervals, and Radiologist Agreement Rate for every classifier we validate.