Every Image Screened, Not Just the Ones You Choose to Send

ARTICLE ABSTRACT

This article walks through how automated AI screening complements teleradiology in a real practice workflow: the veterinarian reads the film, the AI corroborates what they saw and surfaces incidental findings, and teleradiology adds a board-certified or board-eligible specialist’s depth on the cases that genuinely warrant one.

The result is in-appointment answers for the pet owner, fewer next-day callbacks, and more focused telerad referrals on the cases that actually need a specialist’s interpretation.

How automatic AI screening adds comprehensive coverage without changing how you practice

Teleradiology is a trusted and valuable part of veterinary practice. When a case calls for a board-certified radiologist’s interpretation, the ability to send images and receive a detailed report has improved patient care across the profession. Most practices have a teleradiology provider they rely on, and that relationship matters.

Vetology’s AI screening serves a different purpose. It is not a replacement for teleradiology; it is a complement that adds something teleradiology was never designed to do: screen every image, automatically, before anyone has to decide which cases need a specialist read.

The two services work hand in hand. AI screening provides the always-on baseline that catches findings on every study. Teleradiology provides a board-certified or board-eligible radiologist’s interpretation on the cases where a specialist’s depth adds the most.

The Difference Between Selective and Comprehensive

Teleradiology works on a case-by-case basis. The veterinarian evaluates the images, identifies cases that would benefit from specialist input, and submits those for review. This is exactly how the service is designed to work, and it works well.

AI screening works differently. When a practice uses Vetology, every radiograph submitted through the workflow is automatically analyzed across 91+ classifiers covering conditions in canine and feline thorax, abdomen, and spine/musculoskeletal categories. There is no selection step: the screening happens on every study. The screening is validated on 300,000 cases reviewed by board-certified veterinary radiologists, with published sensitivity and specificity for every condition.

This means the AI also reviews images that the DVM may already feel confident about, and that is where the added value shows up. A thorax taken to evaluate a cough also gets screened for cardiac changes, lymphadenopathy, and spinal findings. An abdomen taken for GI signs is screened for organ size changes, mineralization, and structural abnormalities. The AI adds breadth to every study, regardless of the original clinical question.

What This Looks Like at 2 PM on a Tuesday

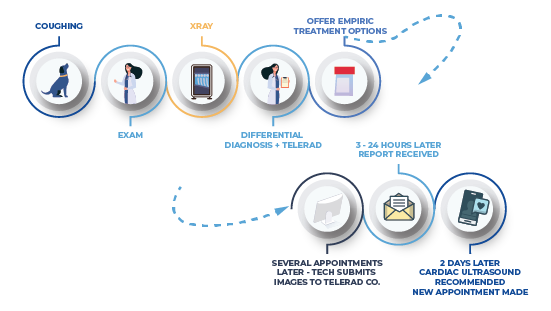

Standard Teleradiology-Only Workflow

A Labrador presents for a persistent cough. You take thoracic radiographs. With teleradiology, you submit the images, move the client out, and call tomorrow with results. The pet parent goes home without answers, and you add another callback to tomorrow’s list.

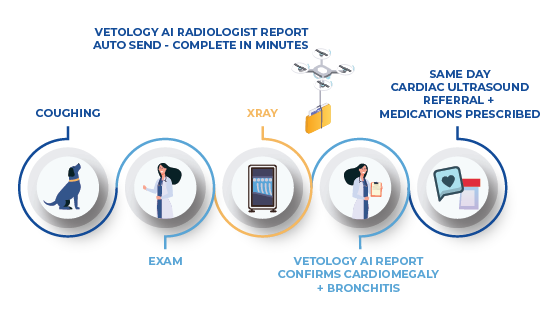

Imaging Workflow with AI in the Mix

With AI screening, the report is available within minutes, while the client is still in the room. The AI flags a bronchial pattern consistent with your clinical suspicion, but it also notes early left atrial enlargement and mild thoracic lymphadenopathy.

You now have a more complete picture to discuss with the client before they leave. If the lymphadenopathy reading prompts a closer look, you can submit that single case for a teleradiology read knowing exactly why you are asking for one.

The AI surfaces the question; the teleradiologist provides the credentialed answer. When you recommend a specialist read to a pet owner, the recommendation is grounded in something specific you saw on the image.

For some veterinarians, that in-appointment information is exactly what they want. Clients leave with clarity and a treatment plan instead of waiting overnight.

For others, the value is more analytical: five fewer callbacks per day, at 8 to 10 minutes each, add up to 40 to 50 minutes of recovered time. That is nearly one additional appointment, or time back in a day that already feels too short. The per-case cost of AI screening on an unlimited subscription is a fraction of a teleradiology read, and the information arrives while it can still shape the visit.
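For practices that want to run this estimate with their own numbers, here is a minimal sketch in Python. The function name is hypothetical, and the inputs simply restate the figures above (five callbacks avoided, 8 to 10 minutes each); substitute your own counts.

```python
def recovered_minutes(callbacks_avoided: int, minutes_per_callback: float) -> float:
    """Minutes per day freed up by callbacks that no longer need to happen."""
    if callbacks_avoided < 0 or minutes_per_callback < 0:
        raise ValueError("inputs must be non-negative")
    return callbacks_avoided * minutes_per_callback


# Figures from the paragraph above: 5 callbacks at 8 to 10 minutes each.
low = recovered_minutes(5, 8)
high = recovered_minutes(5, 10)
print(f"Recovered time: {low:.0f} to {high:.0f} minutes per day")
```

The range is only as good as the callback estimate, so it is worth counting actual callbacks for a week before relying on it.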

Comprehensive Screening in Practice

In practical terms, AI screening means:

- A thorax submitted for cardiac evaluation is also screened for pulmonary patterns, pleural findings, mediastinal changes, and thoracic spine conditions.

- An abdomen submitted for vomiting is also screened for organ size, masses, mineralization, and structural findings across all visible organs.

- A spine study is also screened for degenerative changes, congenital anomalies, and adjacent soft tissue findings.

The AI presents its findings in a structured report alongside the veterinarian’s own assessment. Some findings will confirm what the doctor already noted. Others may highlight something worth a closer look. The veterinarian always makes the final clinical decision.

This is not about replacing clinical judgment. It is about having a consistent, validated screening layer that catches incidental findings the same way every time, whether it is 9 AM or 5:45 PM on a Friday. When something on the screen warrants a specialist’s interpretation, the AI report helps the veterinarian put a focused clinical question on the teleradiology submission. Instead of “please read these films,” the question becomes “please assess thoracic lymphadenopathy and confirm cardiac silhouette.” The radiologist still reads with fresh eyes, on their own, but the clinician’s question is sharper. That sharper question is what makes the report more useful when it comes back.

How the Workflow Plays Out

You read the radiograph the way you always have. The AI report arrives on the same case within minutes, and you check it against what you saw. Most of the time, the AI confirms your read and may add a few findings you were not specifically looking for. That confirmation is the value. You move to diagnosis and treatment planning with one more layer of corroboration behind your decision, and the structured AI report becomes fast and familiar to read over time.

Some cases do not resolve that easily. The findings are subtle, the clinical picture is unclear, or you and the AI together still cannot get to a confident diagnosis or treatment plan. That is where teleradiology adds a layer of human specialist support. The case goes to a board-certified or board-eligible radiologist who can give the read the depth it needs.

Over time, the rhythm becomes natural. You read, the AI corroborates, and you decide whether you have what you need or whether the case warrants a specialist’s interpretation. The AI is the helper that confirms what you already saw. The teleradiologist is the human specialist you turn to when confirmation alone is not enough.

How the Whole Practice Benefits

Practice managers benefit from more complete initial visits. When more information is available during the appointment, more treatment decisions happen while the client is in the room. This means smoother scheduling, fewer follow-up calls to coordinate, and better client retention. Clients who leave with answers are more likely to follow through on treatment plans and return for follow-ups.

Veterinary technicians gain a new dimension to their work. Techs who capture quality radiographs can see the AI’s findings on the images they produced. Over time, this builds familiarity with a broader range of imaging findings and adds professional development value to the imaging workflow.

Front desk and client services staff benefit from the downstream effect: when appointments are more complete, there are fewer follow-up calls to manage and fewer schedule adjustments to coordinate. The day runs more predictably for everyone.

AI Screening and Teleradiology Work Together

These services answer different clinical questions, and knowing when to use each is part of running an efficient imaging workflow.

Lean on AI screening when:

- You want a baseline read on every study, including the ones you already feel confident about

- The pet parent is in the room and a same-visit conversation matters

- You want a structured screen for incidental findings outside the original clinical question

- You want to triage which cases in a busy day actually warrant a specialist’s time

Lean on teleradiology when:

- The case is complex, the findings are subtle, or the clinical stakes are high

- You want a board-certified or board-eligible radiologist’s interpretation on the medical record

- The owner is asking for a specialist opinion before committing to next steps

- You want a credentialed reading you can cite to a referring practice or in a follow-up conversation

In practice, the workflow is straightforward. The AI screens every case automatically. The veterinarian reviews the AI report alongside their own assessment of the films. When something warrants a specialist’s interpretation, the case goes to teleradiology with a sharper clinical question on the submission. The radiologist reads with fresh eyes, the way they always have. The clinician gets a credentialed reading on the cases that need one. The pet parent gets answers in the room when answers are available, and a thorough specialist review when one is appropriate.

Vetology offers both AI screening and teleradiology read by board-certified and board-eligible radiologists, including DACVR/ECVDI diplomates, board-certified cardiologists, and a board-certified dentist. STAT reads return in 2 hours; routine reads return in 24 hours. The AI subscription also works alongside whatever teleradiology provider a practice currently uses, so clinics do not have to choose between them.

Simple, Predictable Pricing

Vetology’s AI screening subscription is $200/month for unlimited studies. Not per-case, not tiered by volume. A flat monthly cost that covers every radiograph the practice submits, whether that is a handful per week or dozens per day. No contracts, no PACS required, and free DICOM storage included.

The AI subscription is the practice’s recurring imaging cost. Teleradiology fees, which start at $86 per single-region report, are billed to the pet owner on the cases the veterinarian sends for a specialist read.

The flat AI cost gives the clinic broad coverage on every study; the per-case telerad fee gives the pet owner a credentialed read on the cases that warrant one. Together they make for a more intentional imaging workflow.
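To see how a flat fee translates into a per-study figure, here is a minimal sketch in Python. The $200 monthly fee and the $86 starting teleradiology fee are the figures quoted above; the monthly study volumes and the function name are hypothetical illustrations, not Vetology data. Note that the two fees fall on different payers (the practice and the pet owner), so the comparison is about scale only.

```python
AI_MONTHLY_FEE = 200.00      # flat subscription, from the pricing above
TELERAD_STARTING_FEE = 86.00  # starting fee per single-region report, from above


def ai_cost_per_study(studies_per_month: int) -> float:
    """Effective AI screening cost per study under the flat monthly fee."""
    if studies_per_month <= 0:
        raise ValueError("studies_per_month must be positive")
    return AI_MONTHLY_FEE / studies_per_month


# Hypothetical monthly volumes, chosen only to illustrate the spread.
for volume in (20, 100, 400):
    per_study = ai_cost_per_study(volume)
    print(f"{volume:>3} studies/month: ${per_study:.2f} per study "
          f"(telerad reads start at ${TELERAD_STARTING_FEE:.2f})")
```

Because the fee is flat, the per-study figure falls as volume rises, which is why screening every study does not add marginal cost.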

The system integrates with widely used practice management systems, including DaySmart Vet, ezyVet, VetRocket, ScribbleVet, and CoVet, with more on the way.