Interpreting Classifier Results: A First Look at Data Science Metrics

What sensitivity, specificity, radiologist agreement rate, and test cases actually tell you about AI diagnostic performance

Written by – Benjamin Cote, Data Scientist | Vetology

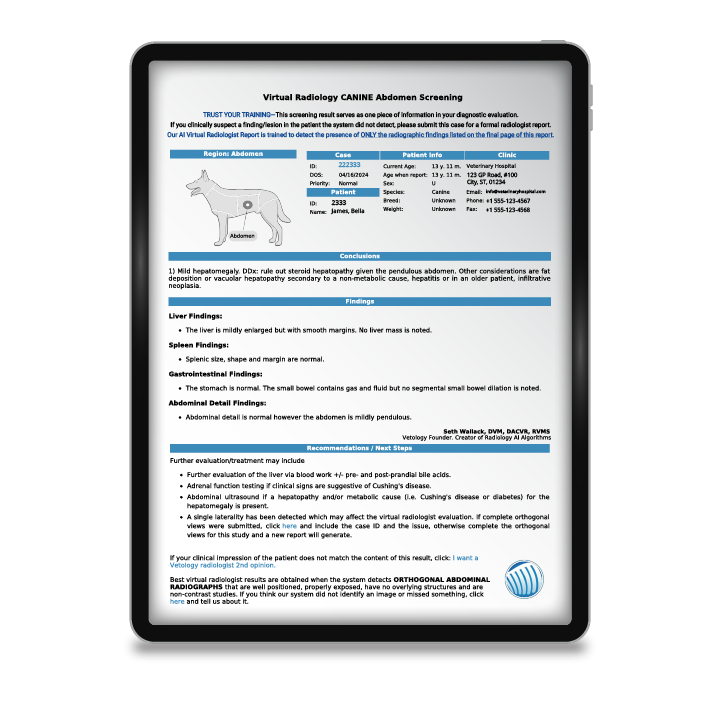

As part of Vetology’s push to be transparent about our AI products, we recently published all of our condition classifiers on our website (find them here if you haven’t taken a look yet: AI Classifier Performance). Since we want you to be able to see how each of our models performs and draw your own conclusions, this article is designed to provide you with some extra knowledge and context to interpret our metrics.

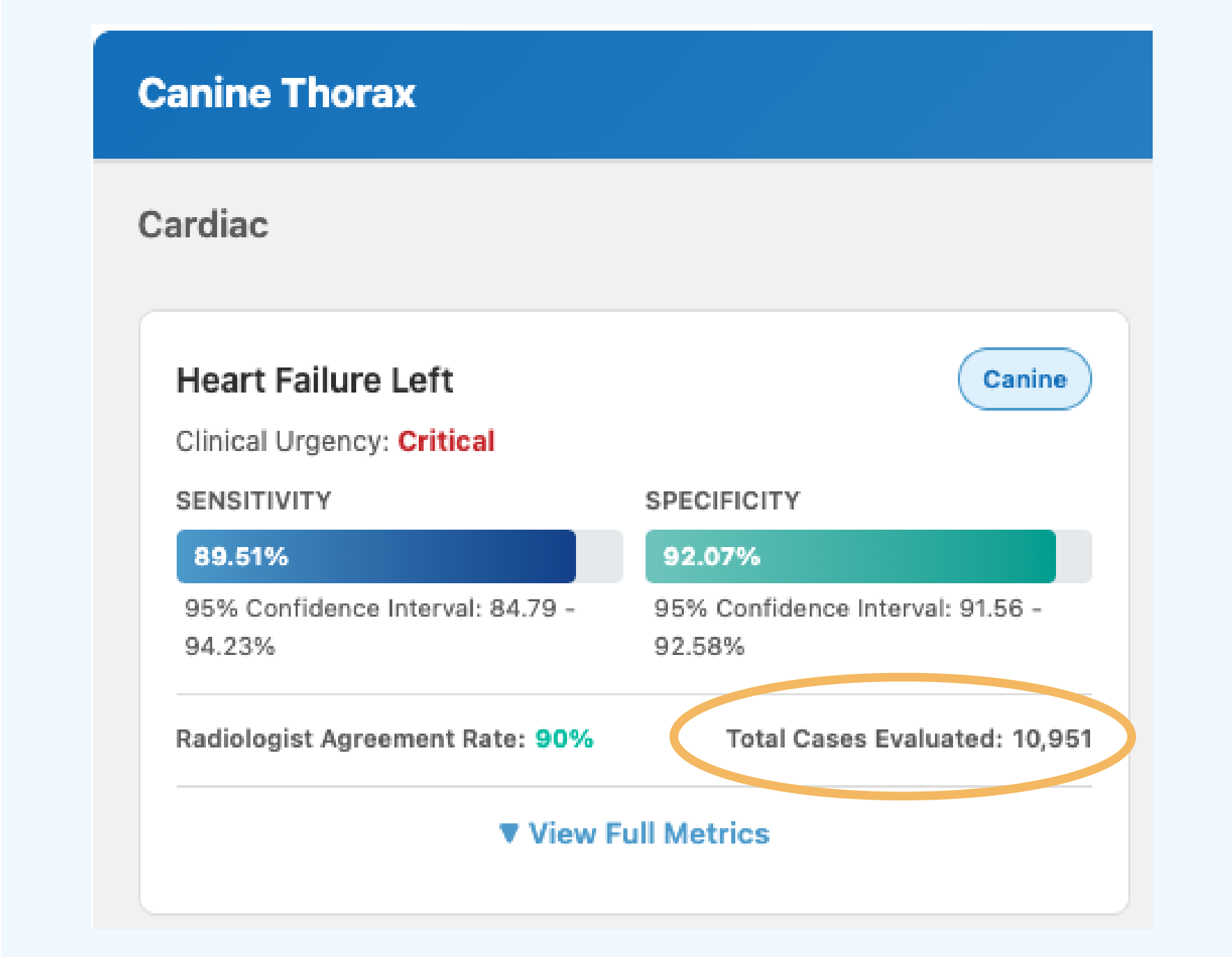

On the AI Condition Classifier Performance Metrics page, we include several key metrics for each of our conditions. For the purposes of this article, we will focus on Sensitivity, Specificity, Radiologist Agreement Rate, and Number of Test Cases. Each measure is a piece of the classifier puzzle, and by understanding the ways they interact, you can see the bigger picture come together. We’ll cover additional metrics in future articles.

Sensitivity and Specificity

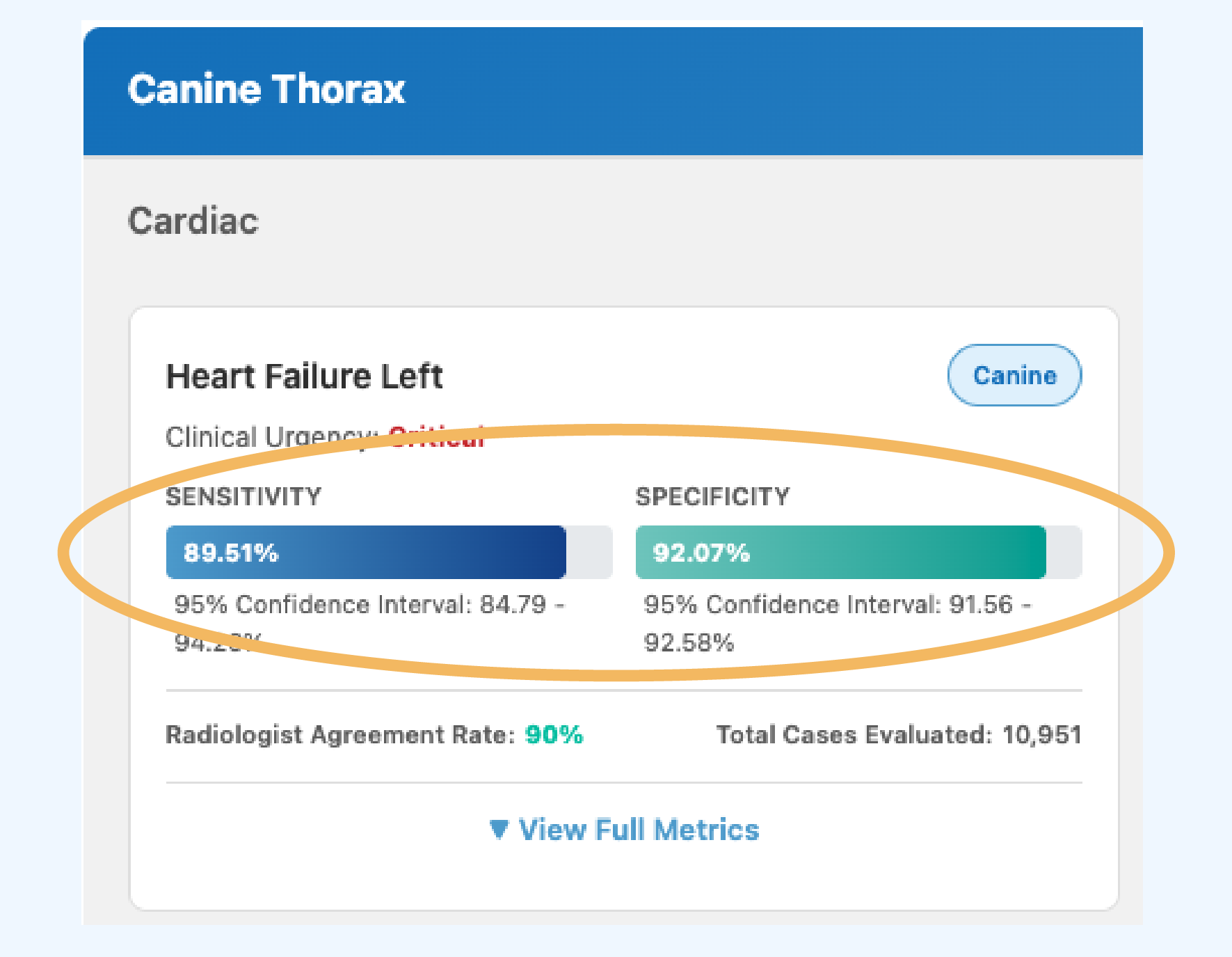

Front and center on each published classifier, you can see the Sensitivity and Specificity scores achieved by that model. These are both common metrics used in data science to measure model performance, and at Vetology they are the primary way we determine if a model is strong enough to be released.

They can be thought of as a pair, each measuring the same kind of performance but on a different class of data. You can think of them as a see-saw: a model that predicts every case as positive would have 100% Sensitivity (but 0% Specificity), and a model that predicts every case as negative would have 100% Specificity (but 0% Sensitivity). Neither would be useful. We want to get Sensitivity and Specificity as high as possible, so the challenge is how to improve each metric without harming the other.
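The see-saw can be reproduced with a few lines of Python. This is only an illustrative sketch: the ten-case dataset and the helper name `sensitivity_specificity` are made up for this example, and the formulas are the ones given in the In-Depth Calculation section at the end of this article.

```python
def sensitivity_specificity(actual, predicted):
    """Return (sensitivity, specificity) for two equal-length lists of booleans.

    True means "disease present"; False means "disease absent".
    """
    pairs = list(zip(actual, predicted))
    tp = sum(a and p for a, p in pairs)          # present, predicted present
    fn = sum(a and not p for a, p in pairs)      # present, predicted absent
    tn = sum(not a and not p for a, p in pairs)  # absent, predicted absent
    fp = sum(not a and p for a, p in pairs)      # absent, predicted present
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical toy data: 2 Positive cases and 8 Negative cases.
actual = [True] * 2 + [False] * 8

always_positive = [True] * len(actual)   # a "model" that flags every case
always_negative = [False] * len(actual)  # a "model" that never flags a case

print(sensitivity_specificity(actual, always_positive))  # (1.0, 0.0)
print(sensitivity_specificity(actual, always_negative))  # (0.0, 1.0)
```

Both extreme models score perfectly on one metric and fail completely on the other, which is why neither metric is meaningful on its own.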

What is Sensitivity?

Sensitivity (True Positive Rate)

Sensitivity is a measure of how often our model correctly recognizes that a disease is present in the patient. It answers the question: “When a case is actually Positive, how often does the model predict Positive?”

When Sensitivity is high, the model correctly recognizes what a given disease looks like. It’s as if the model is telling us: “I know what heart failure looks like, and that is heart failure.”

One way to improve Sensitivity is by training the model on more examples that are positive for the condition so it understands the variation within a disease across many different breeds and sizes.

What is Specificity?

Specificity (True Negative Rate)

Specificity is a measure of how often our model correctly determines that a disease is absent. It answers the question: “When a case is actually Negative, how often does the model predict Negative?”

When Specificity is high, the model can correctly distinguish between a given disease and all other diseases, as if the model is telling us: “I don’t know what that is, but I know that is not an example of heart failure.”

One way to improve Specificity is by training the model on more images that are negative for the condition so it understands what kinds of information are unrelated to this disease. For instance, if we are trying to identify pulmonary nodules, the size and shape of the heart are unlikely to help us make our diagnosis. Instead, we want enough data that our classifier can isolate findings related to pulmonary nodules and ignore irrelevant visual information. That way when key findings aren’t present, the classifier will confidently predict that a disease isn’t present.

What Can You Learn from These Metrics?

Viewed together, these metrics give you an estimate of how well a model performs when predicting on Positives (Sensitivity) and Negatives (Specificity). However, when forced to choose between prioritizing model Sensitivity or Specificity, we tend to prioritize Specificity. This is because our models are trained on many more negative images than positives.

We train on mismatched proportions because even the most common diseases only occur in a small percentage of cases; this imbalance helps ensure that we don’t over-predict the presence of diseases. A consequence of this is that Sensitivity and Specificity percentages are calculated on differently-sized classes, and a 1-percentage-point increase in Specificity usually means a greater increase in total model accuracy than a 1-percentage-point increase in Sensitivity.

The math behind these metrics is not especially complicated, but there are some nuances that require more context. If you want to learn more about how we calculate Sensitivity and Specificity, look at the In-Depth Calculation section at the end of this article.

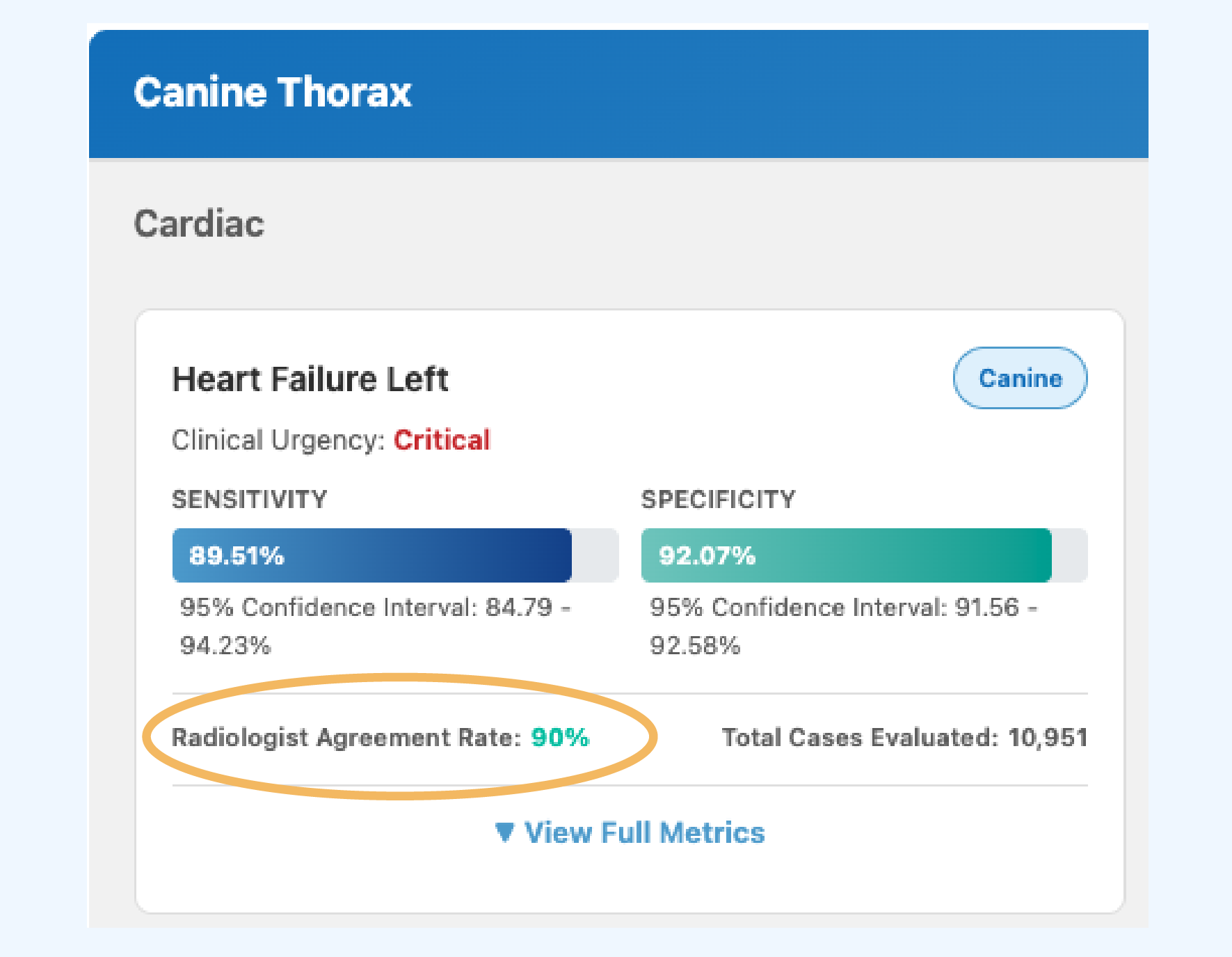

Radiologist Agreement Rate

Radiologist Agreement Rate

The percentage of cases where two US Board Certified Veterinary Radiologists produce the same label (Positive or Negative) on an image. This serves as a real-world benchmark for evaluating AI performance.

We calculate the Radiologist Agreement Rate by comparing the labels that expert radiologists provide on a blind set of shared images. Among this set of Positive and Negative images, we calculate the number of cases the radiologists agreed on out of the total number of cases they reviewed. Regardless of whether they agreed a case is Negative or Positive, so long as the radiologists make the same decision on an image we consider it an agreement.
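As a rough sketch of that calculation, the snippet below counts matching labels over a shared set of images. The function name `agreement_rate` and the five example labels are hypothetical, chosen only to illustrate the arithmetic.

```python
def agreement_rate(labels_a, labels_b):
    """Fraction of shared cases on which two readers gave the same label."""
    if len(labels_a) != len(labels_b):
        raise ValueError("Both readers must label the same set of cases.")
    if not labels_a:
        raise ValueError("At least one case is required.")
    # An agreement is any matching pair, whether both said Positive or both said Negative.
    agreements = sum(a == b for a, b in zip(labels_a, labels_b))
    return agreements / len(labels_a)


# Hypothetical labels from two radiologists on the same five images.
radiologist_1 = ["Positive", "Negative", "Negative", "Positive", "Negative"]
radiologist_2 = ["Positive", "Negative", "Positive", "Positive", "Negative"]

rate = agreement_rate(radiologist_1, radiologist_2)
print(f"{rate:.0%}")  # 80%
```

The two readers disagree only on the third image, so they agree on 4 of 5 cases.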

Interpreting radiographs is as much an art as it is a science! Some conditions can be easily diagnosed from radiograph findings; others cannot. Some conditions are easily visible on a radiograph; others are not. Some conditions look similar to each other; others are completely unique! All that to say, it’s understandable why two expert radiologists may disagree when diagnosing the same patient. It also stands to reason that if a condition is hard for an expert radiologist to interpret from radiographs, our classifier may also have trouble consistently identifying it.

What Can You Learn from Radiologist Agreement Rate?

Low Agreement Rate

If the radiologist agreement rate is low, this means a condition is hard for radiologists to reliably diagnose. This is a place where our models often shine.

- With extremely rare conditions, a clinician or radiologist may encounter it only a handful of times over the course of their career.

- In contrast, our models are trained on hundreds or thousands of examples, so our sensitivity and specificity metrics can often surpass radiologist agreement rates.

- Through the aggregation of clinical examples globally, these models can help you feel confident in recognizing rare findings.

- Other times, agreement rate is low because a disease is hard for radiologists to determine visually.

- Our models may struggle with these conditions too. Sometimes they can pick up on patterns too minuscule for the human eye to see, but other times it’s just as hard for the neural network to come to a conclusion.

- When this is the case, our models may have low sensitivity and specificity scores that mirror low radiologist agreement rate.

High Agreement Rate

If the radiologist agreement rate is high, it means that this condition is easier for radiologists to reliably diagnose.

- This could be because the disease presents consistently on radiographs, because it is easy to identify, or because a particular finding unambiguously indicates that disease.

- When the agreement rate is high, model performance also tends to be high because the neural network is picking up on the same visual patterns as the radiologists.

- However, you’ll notice that some model performance metrics don’t match their high radiologist agreement rate.

- This is something we take seriously. We want every model to perform as well as, if not better than, the agreement rate so you can be confident in our predictions.

TRANSPARENCY NOTE

When you see conditions published with scores that are below the agreement rate, you can be confident that we are working to retrain a higher-performing model. Sometimes we will release a model below agreement rate because clinics have specifically requested it, and we feel confident that it has strong performance even if it is not as high as we would like. Other times, we are limited by low Positive case counts and have trained the highest-performing model we can at the time of publication. The decision usually comes down to whether it’s a high-priority condition or not.

Total Cases Evaluated

Total Test Cases

The number of unique patient cases used to evaluate a classifier and generate Sensitivity and Specificity metrics. This includes both Positive cases (disease present) and Negative cases (disease absent, which may include other conditions).

The number of evaluated cases shows the number of unique cases we used to test that particular classifier and generate our Sensitivity and Specificity metrics.

For example, the Canine Thorax condition Heart Failure Left has 10,951 total test cases, which means our performance metrics come from generating model predictions on 10,951 unique sets of radiographs, all from different dogs.

This number includes both the Positive cases where a disease is present, and the Negative cases. However, just because a case is labeled as Negative, that doesn’t always mean the animal is healthy – in fact, we make sure our set of Negative examples includes cases with a variety of other findings or diseases within the body region, just one of which is a “healthy” finding.

What Can You Learn from Test Case Counts?

As the number of test cases grows, so does the variation in examples our model is tested against. Each case introduces a unique combination of animal size, age, scan quality, and number of diseases present or absent. When a condition is tested on large quantities of data and has high Sensitivity and Specificity performance, you can feel more confident that the model is robust enough to find the disease in animals of many sizes and to handle the curveball cases you throw at it.

An In-Depth Look: Sensitivity and Specificity Calculation

In data science, we often categorize our data by multiple labels at the same time. This can easily lead to confusion, which is why we describe outcomes using terminology like True Positive, True Negative, False Positive, and False Negative.

The table below shows the difference between each label. In short:

- A case is True if the predicted label matches the actual label, and False if the predicted label does not match the actual label.

- For example, if a model predicts that cardiomegaly is present in an image but a radiologist has determined that cardiomegaly is not present, we would call that classification a False Positive because the classifier falsely predicted cardiomegaly to be positive.

| | Condition Is Present | Condition Is Absent |
|---|---|---|
| Model Predicts Condition as Present | True Positive (TP) | False Positive (FP) |
| Model Predicts Condition as Absent | False Negative (FN) | True Negative (TN) |
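The naming rule in the table can be written as a small function. This is a minimal sketch; the function name `outcome` and its boolean arguments are hypothetical and exist only for illustration.

```python
def outcome(predicted_present: bool, actually_present: bool) -> str:
    """Name the outcome of one prediction using the table's terminology."""
    # "True" or "False" says whether the prediction matched the actual label.
    correctness = "True" if predicted_present == actually_present else "False"
    # "Positive" or "Negative" says what the model predicted.
    prediction = "Positive" if predicted_present else "Negative"
    return f"{correctness} {prediction}"


# The cardiomegaly example: the model predicts present, the radiologist says absent.
print(outcome(predicted_present=True, actually_present=False))  # False Positive
```

Note that the second word always describes the model's prediction, not the actual label.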

Sensitivity

True Positives ÷ (True Positives + False Negatives)

Specificity

True Negatives ÷ (True Negatives + False Positives)
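The two formulas translate directly into code. In the sketch below, the counts are hypothetical and were chosen only to keep the arithmetic easy to follow.

```python
def sensitivity(tp: int, fn: int) -> float:
    """True Positives / (True Positives + False Negatives)."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """True Negatives / (True Negatives + False Positives)."""
    return tn / (tn + fp)


# Hypothetical counts: 100 Positive cases and 1,000 Negative cases.
tp, fn = 90, 10
tn, fp = 800, 200

print(f"Sensitivity: {sensitivity(tp, fn):.0%}")  # Sensitivity: 90%
print(f"Specificity: {specificity(tn, fp):.0%}")  # Specificity: 80%
```

Each metric uses only one column of the table: Sensitivity is computed from the cases where the condition is present, and Specificity from the cases where it is absent.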

Why Specificity Improvements Have a Bigger Impact

Earlier in this article, I explained that we try to prioritize model Specificity over Sensitivity if we can no longer actively improve both metrics. Let’s explore why that is by walking through a short example.

Imagine we have a dataset with 500 Positives, 5,000 Negatives, and the model has 85% Sensitivity and 85% Specificity:

| | Positive Cases | Negative Cases | Total Cases |
|---|---|---|---|
| Total | 500 | 5,000 | 5,500 |
| Predicted Correctly | 425 | 4,250 | 4,675 |
| Predicted Incorrectly | 75 | 750 | 825 |
| Metric Score | 85% Sensitivity | 85% Specificity | 85% Accuracy |

Based on the number of Positive cases, the model correctly predicted the disease on 425 cases and only misclassified 75 cases, which is pretty good! But 85% Specificity on 5,000 Negative cases means that 4,250 cases were predicted correctly as Negative, and 750 cases were misclassified. While the scores are the same, they represent very different numbers of misclassified images.

Let’s look at what happens to model accuracy if we improve either Sensitivity or Specificity by 10 percentage points without changing the other metric’s score:

Improving Sensitivity from 85% to 95%:

| | Positive Cases | Negative Cases | Total Cases |
|---|---|---|---|
| Total | 500 | 5,000 | 5,500 |
| Predicted Correctly | 475 (+50) | 4,250 | 4,725 (+50) |
| Predicted Incorrectly | 25 (-50) | 750 | 775 (-50) |
| Metric Score | 95% Sensitivity (+10 points) | 85% Specificity | 85.9% Accuracy (+0.9 points) |

Improving Specificity from 85% to 95%:

| | Positive Cases | Negative Cases | Total Cases |
|---|---|---|---|
| Total | 500 | 5,000 | 5,500 |
| Predicted Correctly | 425 | 4,750 (+500) | 5,175 (+500) |
| Predicted Incorrectly | 75 | 250 (-500) | 325 (-500) |
| Metric Score | 85% Sensitivity | 95% Specificity (+10 points) | 94.1% Accuracy (+9.1 points) |

KEY TAKEAWAY

A 10-percentage-point increase in Sensitivity improves overall accuracy by 0.9 percentage points, while the same increase in Specificity improves overall accuracy by 9.1 percentage points. When there are so many more Negative cases than Positive cases, an equivalent increase in percentage does not equate to an equivalent increase in model accuracy.
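The tables above can be reproduced with a short calculation. This is a sketch that assumes accuracy is simply correct predictions divided by total cases, as in the tables; the function name `accuracy` is our own choice for this example.

```python
def accuracy(n_pos: int, n_neg: int, sensitivity: float, specificity: float) -> float:
    """Overall accuracy given class sizes and per-class performance."""
    correct = n_pos * sensitivity + n_neg * specificity
    return correct / (n_pos + n_neg)


# The worked example from this section: 500 Positives and 5,000 Negatives.
baseline = accuracy(500, 5000, 0.85, 0.85)
better_sensitivity = accuracy(500, 5000, 0.95, 0.85)
better_specificity = accuracy(500, 5000, 0.85, 0.95)

print(f"Baseline:           {baseline:.1%}")            # 85.0%
print(f"Sensitivity to 95%: {better_sensitivity:.1%}")  # 85.9%
print(f"Specificity to 95%: {better_specificity:.1%}")  # 94.1%
```

Because the Negative class is ten times larger in this example, the same 10-point gain moves ten times as many cases from incorrect to correct.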

Conclusion

Assessing the performance of a disease classifier can be tricky. Sometimes it’s unclear what a metric represents, or how to compare across models. It can also be difficult to interpret when a classifier is performing well because you have to consider not only its Sensitivity and Specificity scores, but also the Radiologist Agreement Rate.

If Sensitivity and Specificity are both around 70% and the Radiologist Agreement Rate is 63%, then it’s a strong model that can pick up on details that even expert radiologists may not see. However, if a model with those same scores had a Radiologist Agreement Rate of 85%, then the model would be significantly underperforming. Everything is relative, and at Vetology we have to consider how all our metrics interact before we publish new condition classifiers.
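One way to picture this comparison is the sketch below. It is a hypothetical rule of thumb written for this article, not the process we use to decide on releases: it assumes the weaker of the two metrics is compared against the Radiologist Agreement Rate.

```python
def compare_to_agreement(sensitivity: float, specificity: float, agreement: float) -> str:
    """Hypothetical check: compare the weaker metric to the agreement benchmark."""
    weakest = min(sensitivity, specificity)
    if weakest >= agreement:
        return "at or above agreement rate"
    return "below agreement rate"


# The two scenarios described above, with the same model scores.
print(compare_to_agreement(0.70, 0.70, 0.63))  # at or above agreement rate
print(compare_to_agreement(0.70, 0.70, 0.85))  # below agreement rate
```

The same 70% scores lead to opposite readings depending on the benchmark, which is the point of treating the agreement rate as context rather than a separate number.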

Now that you have an idea of what these metrics mean, take a look at our classifier results. Transparency means you can be part of this process. Notice the great work we’ve done, but also notice the areas we need to work on. With our monthly bundle releases, we are constantly increasing performance of existing models and adding coverage through new disease classifiers. So please, check back in soon and see where we’ve made our latest improvements.