Vetology Strengthens Leadership Team with New Director of Sales

FOR IMMEDIATE RELEASE

Veterinary commercial leader Pierre D’Amours joins growing team as Vetology expands board-certified radiologist services and AI diagnostic platform

March 16, 2026 – SAN DIEGO, CA – Vetology, a provider of AI-assisted radiology and board-certified teleradiology services for veterinary practices, today announced the addition of Pierre D’Amours as Director of Sales. The newly created role reflects the company’s growth trajectory.

President Eric Goldman, who has led Vetology’s commercial efforts since founding the company, and D’Amours will partner closely to build a sales organization that brings Vetology’s services to more veterinary practices across North America and internationally.

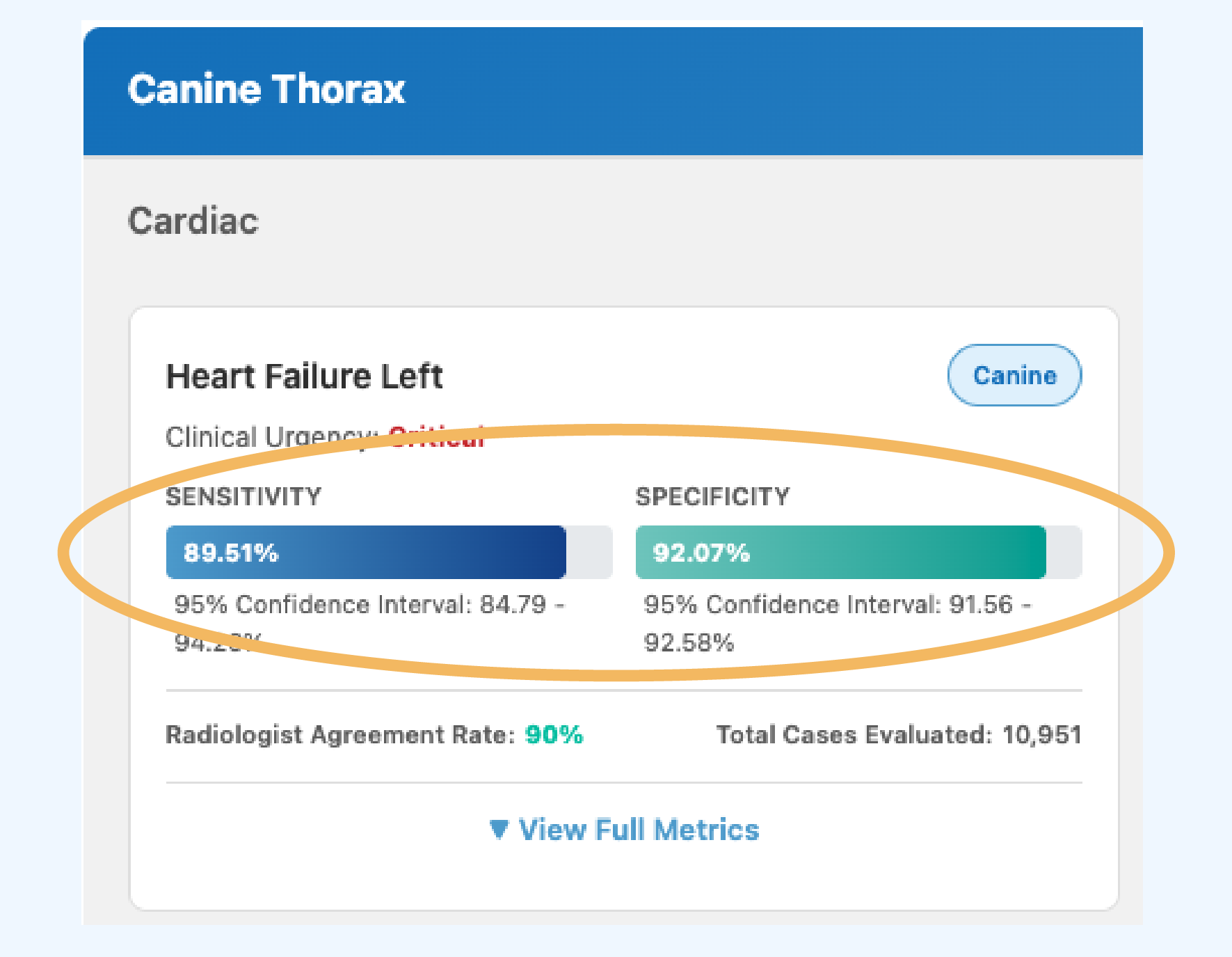

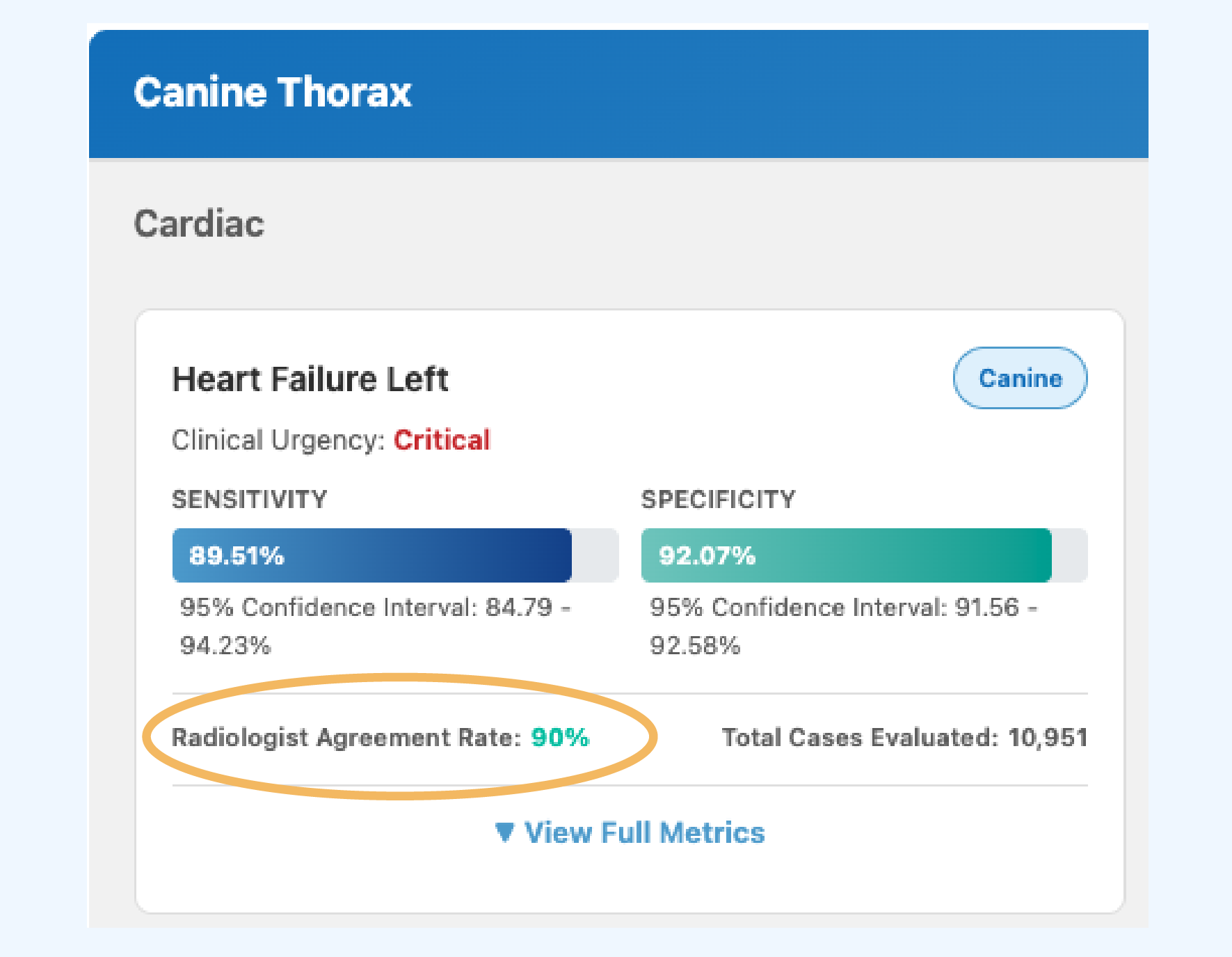

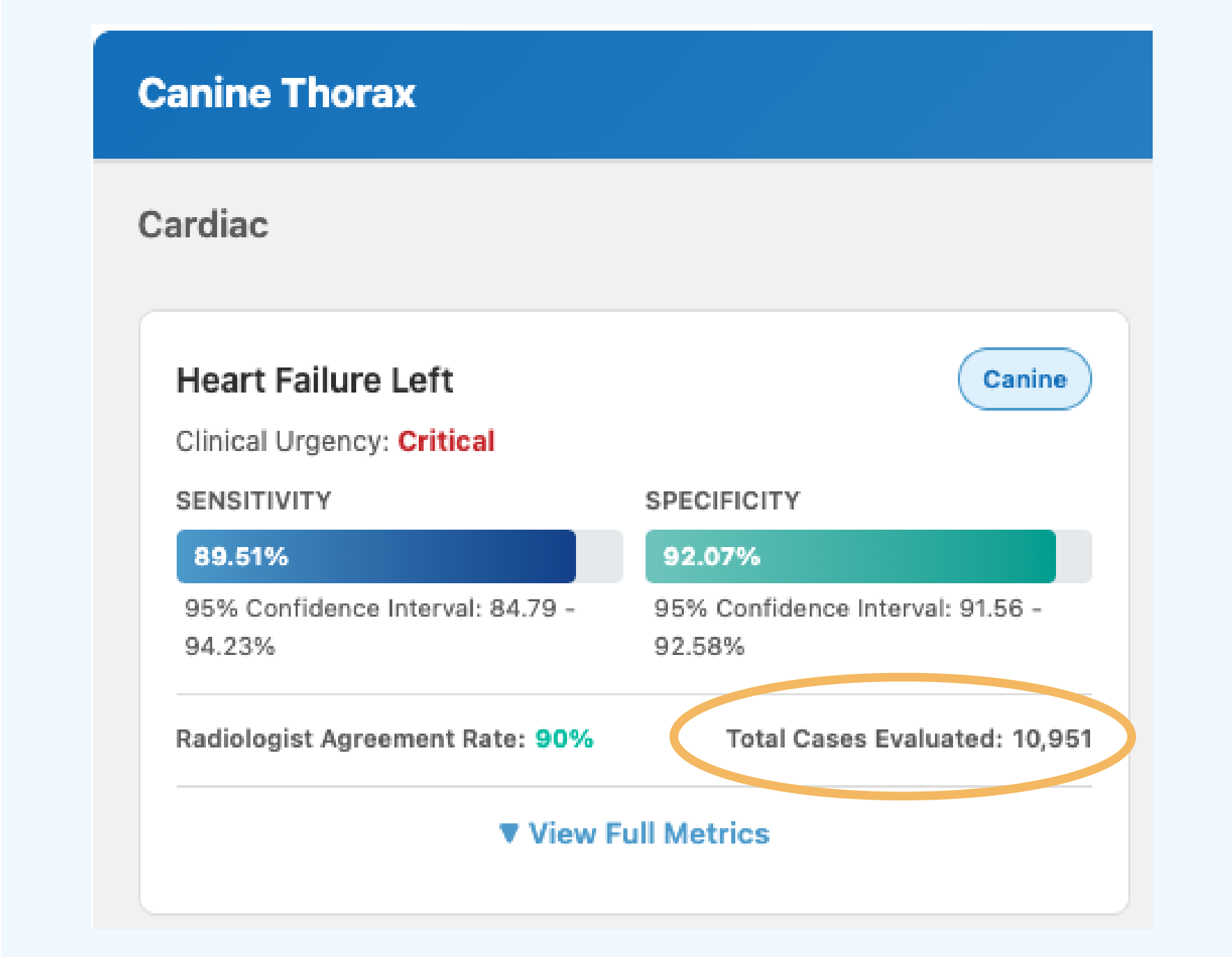

Vetology’s platform now includes 94+ feline and canine AI classifiers that screen radiographs for conditions across thorax, abdomen, spine, and musculoskeletal studies. New classifiers are released monthly, and all performance metrics are published publicly. The company also provides on-demand access to board-certified veterinary radiologists for specialist-level interpretation. As the platform and radiologist team grow, Vetology is investing in the commercial infrastructure to match.

“We started Vetology to close the gap between the number of practices that need diagnostic imaging expertise and the number of board-certified radiologists available to provide it. AI was the solution: a way to give every practice access to consistent, validated screening regardless of where they are and when they need it. We paired that with our own team of board-certified radiologists so practices have both. I’ve been having this conversation with practices since day one. Pierre has the industry relationships and credibility to help us bring Vetology’s service and solutions to more practices, and I’m excited to work alongside him.”

Eric Goldman, President, Vetology

An Industry Insider

D’Amours brings seven years of veterinary commercial experience as Vice President of North America Sales & Operations at Movora (Vimian Group AB), where he ran a $70M+ veterinary medical devices and SaaS business. He is fluent in English and French, holds a Bachelor of Commerce from Concordia University, and has deep relationships across veterinary practices, distributors, and corporate groups throughout North America.

Pierre understands the challenges inherent in running a veterinary practice and how the right technology can solve real problems in day-to-day operations. At Vetology, he will work with veterinarians and management teams to make sure that we are delivering on our promises both during and after the sale.

“When I evaluated Vetology, what stood out was a company that had done the hard work first: building the AI, hiring board-certified radiologists, validating the classifiers, and publishing all of it for the industry to review. That kind of transparency is rare in this space. I’ve spent years working with veterinary practices, and the right technology should solve real operational problems, not add complexity.

“My focus is to partner closely with DVMs to make sure we deliver on that promise, both during the sales process and well after implementation, and to build a sales team grounded in trust, honest about where our solutions fit, and focused on long-term partnerships over transactions.”

Pierre D’Amours, Director of Sales, Vetology

# # #

ABOUT VETOLOGY

Vetology is a veterinary diagnostic imaging support company that provides AI-generated screening reports and traditional teleradiology services by board-certified veterinary radiologists. Built by radiologists, Vetology focuses on improving patient outcomes through accuracy, speed, and reliability in diagnostic imaging. Our platform is designed to integrate seamlessly into existing hospital workflows, helping clinicians make informed decisions quickly.

Learn more at vetology.net.